What Is Dynamic Memory Sparsification (DMS)?

If you’re running LLMs in production, you’ve probably seen this:

- Models slowing down when reasoning gets deep

- Fewer users per server than expected

- GPU bills rising fast

The problem isn’t always compute.

It’s memory.

When models “think” step by step, they keep the attention keys and values for every reasoning token in GPU memory. This store is the KV cache.

The longer they think, the larger this cache becomes.

Memory grows linearly with the number of tokens generated. Costs grow with it.
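
To make the linear growth concrete, here is a back-of-the-envelope sketch. The model shape (32 layers, 8 KV heads, head dimension 128, 16-bit values) is an assumed, illustrative configuration, not a figure from the DMS work.

```python
def kv_cache_bytes(num_tokens, num_layers=32, num_kv_heads=8,
                   head_dim=128, bytes_per_value=2):
    """Approximate KV cache size for one sequence.

    Every token stores one key and one value vector (hence the factor 2)
    per layer and per KV head. All defaults are illustrative assumptions.
    """
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return num_tokens * per_token


# Doubling the number of tokens doubles the cache: growth is linear.
for tokens in (1_000, 8_000, 32_000):
    gib = kv_cache_bytes(tokens) / 2**30
    print(f"{tokens:>6} tokens -> {gib:.2f} GiB per sequence")
```

Under these assumptions each token costs 128 KiB, so a long reasoning trace can occupy several GiB for a single user.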

So teams face a trade-off:

Let the model think longer → better accuracy → higher infra cost

Limit thinking → lower cost → weaker performance

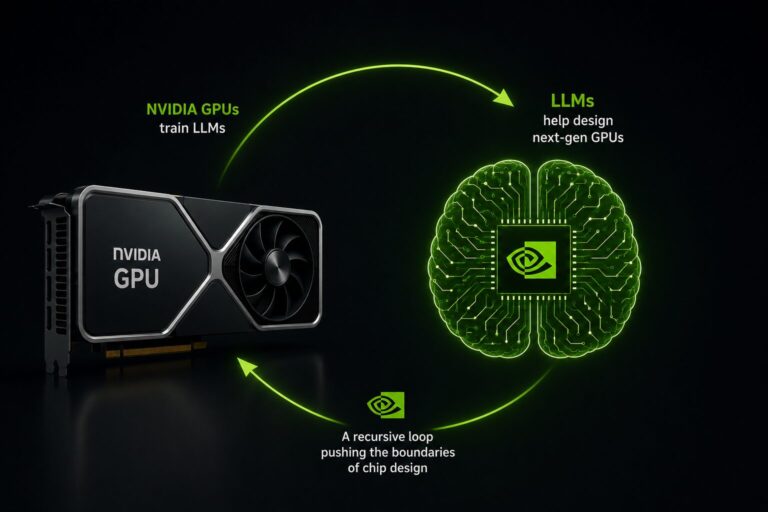

NVIDIA researchers introduced a technique aimed at this trade-off: Dynamic Memory Sparsification (DMS).

Instead of keeping every token in the cache, the model learns which tokens to keep and which to evict.

Even better, it doesn’t delete things immediately. It briefly keeps “maybe useful” tokens before removing them. That avoids hurting reasoning quality.

How?

They add a small decision layer inside the attention mechanism. For every token, the model also predicts whether that token will matter for future reasoning.
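
One way to picture such a decision layer is a tiny logistic gate that scores each token. This is a minimal sketch under stated assumptions: the feature vector, `weights`, `bias`, and `threshold` are all hypothetical, and the article does not specify how DMS actually computes its prediction.

```python
import math


def eviction_probability(token_features, weights, bias):
    """Logistic score: estimated probability that a token can be evicted.

    `token_features`, `weights` and `bias` are hypothetical placeholders
    for whatever signal the learned memory policy reads.
    """
    logit = sum(f * w for f, w in zip(token_features, weights)) + bias
    return 1.0 / (1.0 + math.exp(-logit))


def should_evict(token_features, weights, bias, threshold=0.5):
    """Turn the probability into a keep/evict decision for one token."""
    return eviction_probability(token_features, weights, bias) > threshold


# Example with made-up numbers: a positive logit leans toward eviction.
print(should_evict([2.0, 1.0], [1.0, 1.0], 0.0))
print(should_evict([-2.0, -1.0], [1.0, 1.0], 0.0))
```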

During training:

- Most of the model is frozen.

- Only this “memory policy” is trained.

- If deleting a token changes the final answer, the model is penalized.

So it gradually learns:

“If removing this breaks my reasoning → keep it.”

“If nothing changes → it’s redundant.”
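
The intuition can be sketched with a toy, single-head attention over scalar values: remove one token and measure how far the output moves. This is an illustration of the idea only, not the DMS training objective; the function names and numbers are assumptions.

```python
import math


def attention_output(scores, values, keep):
    """Softmax-weighted average of `values` over tokens where keep[i] is True."""
    kept = [(s, v) for s, v, k in zip(scores, values, keep) if k]
    top = max(s for s, _ in kept)  # subtract the max for numerical stability
    weights = [math.exp(s - top) for s, _ in kept]
    total = sum(weights)
    return sum(w * v for w, (_, v) in zip(weights, kept)) / total


def eviction_penalty(scores, values, evict_index):
    """How far the output moves when one token is removed (near 0 = redundant)."""
    full = attention_output(scores, values, [True] * len(scores))
    keep = [i != evict_index for i in range(len(scores))]
    sparse = attention_output(scores, values, keep)
    return abs(full - sparse)


scores = [4.0, 0.0, -6.0]   # token 0 dominates attention, token 2 is barely read
values = [1.0, 0.5, 0.0]
print(eviction_penalty(scores, values, 0))  # large: removing it breaks the output
print(eviction_penalty(scores, values, 2))  # tiny: the token is redundant
```

A policy trained against a penalty like this is pushed to keep token 0 and is free to drop token 2.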

There’s also a safety mechanism called delayed eviction. Tokens flagged for removal aren’t deleted instantly. They stay in the cache for a short window so the model can absorb any remaining signal before they are removed.
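
Delayed eviction can be sketched as a small bookkeeping structure. This is a simplified, hypothetical model: the class name, the `delay` parameter, and the boolean `evict` flag stand in for the learned policy and its window, and real caches hold key/value tensors rather than token ids.

```python
from collections import deque


class DelayedEvictionCache:
    """Toy cache where flagged tokens survive for `delay` steps before removal."""

    def __init__(self, delay):
        self.delay = delay
        self.kept = []          # token ids the policy decided to keep
        self.pending = deque()  # (token_id, step at which it disappears)
        self.step = 0

    def append(self, token_id, evict):
        # First drop flagged tokens whose grace period has run out.
        while self.pending and self.pending[0][1] <= self.step:
            self.pending.popleft()
        if evict:
            # Flagged, but still readable until step + delay.
            self.pending.append((token_id, self.step + self.delay))
        else:
            self.kept.append(token_id)
        self.step += 1

    def visible(self):
        """Token ids that attention can still read right now."""
        return sorted(self.kept + [tok for tok, _ in self.pending])


cache = DelayedEvictionCache(delay=2)
for token_id, evict in [(0, True), (1, False), (2, True), (3, False), (4, False)]:
    cache.append(token_id, evict)
    print(token_id, cache.visible())
```

Token 0 is flagged immediately yet is still readable when token 1 arrives. It only disappears once its two-step window has passed.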

The impact?

- Up to 8× lower memory cost

- Up to 5× higher throughput

- In some cases, better reasoning under the same budget

That’s not a small optimization.

That changes how many customers one GPU can serve.
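
A rough capacity calculation shows why. The 40 GiB cache budget and 4 GiB per sequence are made-up numbers for illustration. Only the 8× compression factor comes from the reported results, and it is an upper bound.

```python
def max_concurrent_sequences(cache_budget_gib, per_sequence_gib, compression=1.0):
    """Sequences that fit in the budget when each cache shrinks by `compression`."""
    return int(cache_budget_gib // (per_sequence_gib / compression))


# Hypothetical GPU with 40 GiB left for KV cache, 4 GiB per long reasoning trace.
baseline = max_concurrent_sequences(40, 4)
with_dms = max_concurrent_sequences(40, 4, compression=8.0)
print(baseline, with_dms)
```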

As AI systems move from simple chat to multi-step reasoning agents, memory, not compute, may become your biggest constraint.

Worth watching closely.

If you have questions, feedback, or disagree with something in this article, I’d love to hear your perspective. Connect with me on LinkedIn:

https://www.linkedin.com/in/nikhileshtayal/